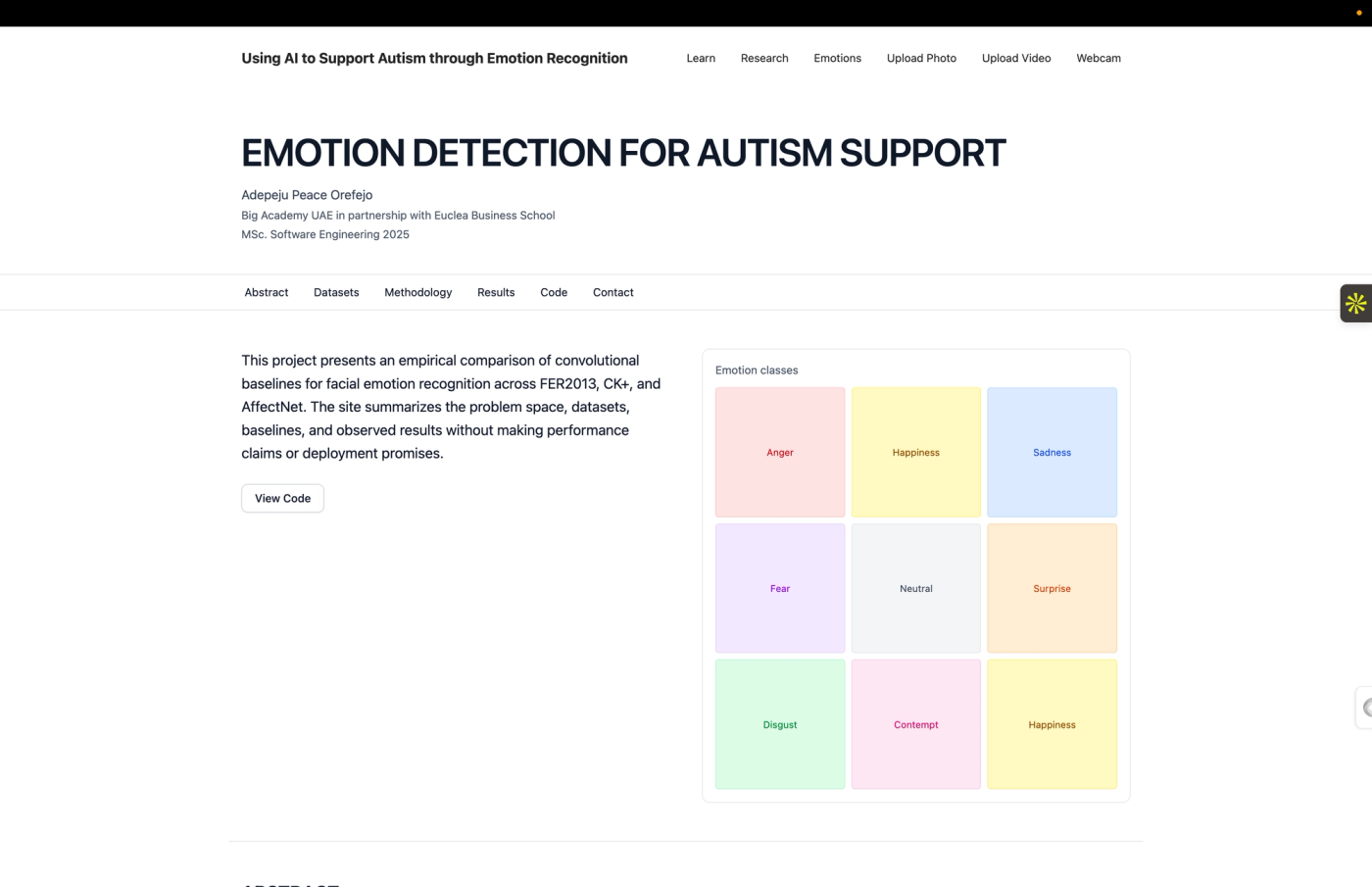

Emotion Detection for Autism Support

A CNN-based facial emotion recognition system trained on FER2013, CK+, and AffectNet datasets, with D3.js sunburst visualisations representing emotional states.

My MSc thesis project at Big Academy UAE / Euclea Business School. The research side trains and compares three CNN models from scratch — one on FER2013, one on CK+, one on AffectNet — to measure how well each generalises across datasets. No transfer learning, no pretrained weights, just standard convolutional architectures to establish honest baselines.

The applied side is a full-stack web app. Upload a photo, point your webcam, or drop a video, and the system detects faces and classifies emotions in real time. The backend runs on FastAPI with OpenCV for face detection and TensorFlow for inference. The frontend is a React app with pages for each input mode, a research overview, and an interactive emotions reference.

The point wasn't to beat state-of-the-art benchmarks — it was to understand where simple models break down across different data conditions, and to build something usable that demonstrates the findings.

Highlights

- Full research pipeline: preprocessing, training, confusion matrices, per-class metrics, and visualisation

- React frontend with interactive emotions reference, research overview, and multiple analysis modes

- FastAPI backend with OpenCV face detection and TensorFlow inference, deployed on Render

- Live web app with webcam, photo upload, and video analysis — faces detected and emotions classified in real time

- Three CNNs trained from scratch on FER2013 (59% accuracy), CK+ (100%), and AffectNet (62.5%), each also evaluated on the other two datasets

What this is

My MSc thesis at Big Academy UAE in partnership with Euclea Business School. The question: how well do simple CNNs trained from scratch handle facial emotion recognition, and where do they fall apart when you test them across different datasets?

Alongside the research, I built a web app that puts the trained models behind an API so anyone can test them with their own face — via webcam, photo upload, or video.

The research

I trained three separate CNN models, each on a different dataset:

- FER2013 — 35k grayscale images scraped from the internet. Noisy, mislabeled, realistic. The model hit 59% accuracy.

- CK+ — lab-controlled posed expressions. Clean and small. 100% accuracy, which says more about the dataset than the model.

- AffectNet — large-scale, in-the-wild images with 8 emotion classes. 62.5% accuracy.

Each model was then evaluated on all three datasets to measure cross-dataset generalisation. The interesting finding is how badly the model trained on clean, posed data (CK+) performs on messy real-world images, and how poorly the models trained on in-the-wild data transfer in the other direction.
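The cross-dataset evaluation boils down to a small accuracy matrix: every model scored on every test set. Here is a minimal sketch of that idea, not the thesis code. The names `accuracy` and `cross_dataset_matrix` are hypothetical, models are treated as plain callables that return a label string, and it assumes test labels have already been mapped to a shared vocabulary (the datasets do not all use the same number of classes).

```python
from typing import Callable, Dict, Sequence, Tuple

# A sample is (image, label); the image type is left opaque on purpose.
Sample = Tuple[object, str]
# Hypothetical interface: a classifier maps one image to one label string.
Classifier = Callable[[object], str]


def accuracy(model: Classifier, samples: Sequence[Sample]) -> float:
    """Fraction of samples whose predicted label matches the true label."""
    if not samples:
        raise ValueError("cannot score an empty test set")
    correct = sum(1 for image, label in samples if model(image) == label)
    return correct / len(samples)


def cross_dataset_matrix(
    models: Dict[str, Classifier],
    test_sets: Dict[str, Sequence[Sample]],
) -> Dict[str, Dict[str, float]]:
    """Score every model on every test set.

    The result is indexed as matrix[trained_on][tested_on]. Cells where both
    keys name the same dataset are in-dataset accuracy; the rest measure
    how well a model generalises to data it was not trained on.
    """
    return {
        trained_on: {
            tested_on: accuracy(model, samples)
            for tested_on, samples in test_sets.items()
        }
        for trained_on, model in models.items()
    }
```

Reading the matrix row by row shows how far each model drops once it leaves the dataset it was trained on, which is the comparison the headline accuracy figures cannot show on their own.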

No transfer learning, no pretrained backbones. The goal was to establish baselines, not to chase leaderboard scores.

The app

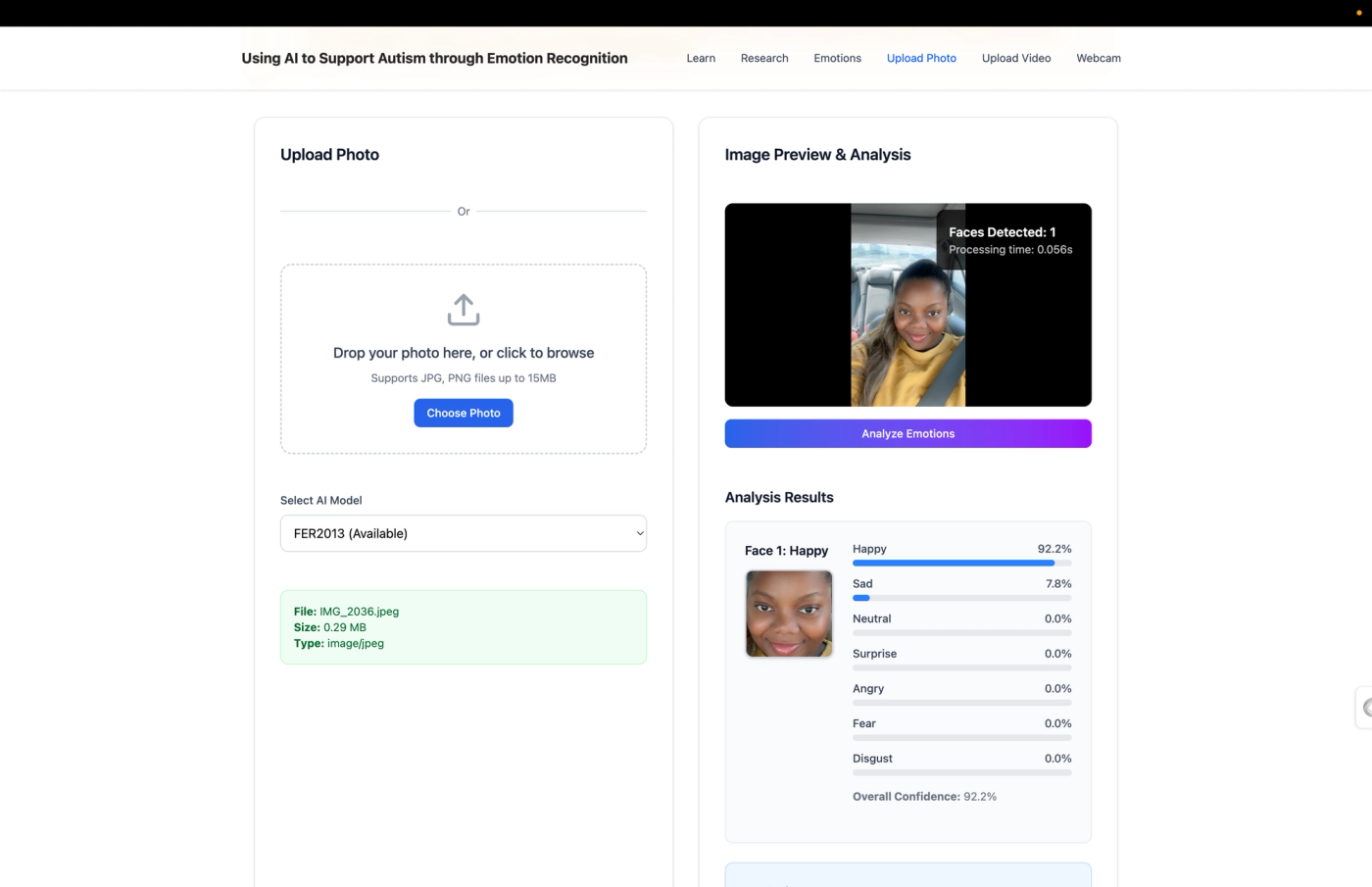

The research is more useful if people can actually interact with it, so I built a full-stack web app:

- Webcam mode — live face detection and emotion classification in the browser

- Photo upload — drop an image, get per-face emotion predictions with confidence scores

- Video analysis — process a video file frame by frame

- Emotions reference — an interactive page explaining each emotion class with definitions

- Research overview — the datasets, methodology, and results presented accessibly

The frontend is React with Tailwind and shadcn/ui. The backend is FastAPI serving the TensorFlow models, with OpenCV handling face detection. Deployed on Render.

How inference works

When an image hits the /api/predict endpoint:

- OpenCV's Haar cascade detects face bounding boxes

- Each face is cropped, converted to grayscale, resized to 48×48

- The selected model runs inference and returns a probability distribution across emotion classes

- The API sends back bounding boxes, labels, confidence scores, and cropped face thumbnails

It's not fast enough for real-time video at high resolution, but for single images and webcam snapshots it's responsive enough to feel instant.

What I learned

The biggest takeaway is that dataset quality matters more than model complexity. A simple CNN on clean data looks perfect; the same architecture on noisy real-world data struggles. Cross-dataset evaluation is the only honest way to measure how well a model actually understands emotions versus how well it memorised a specific dataset's quirks.